Throughout history, humanity has gone through several major engineering breakthroughs that increased our productivity and extended our physical abilities through steam, diesel, and electric power: from the first industrial revolution, which gave us steam power, to the third, which gave us the computer and the internet.

Artificial intelligence (A.I.) may well be the greatest technological revolution yet, a paradigm shift, for one simple reason: previous breakthroughs have all affected our physical abilities, whereas A.I. would instead replace our cognitive abilities. As a result, many jobs are likely to disappear.

The concept of artificial intelligence appeared for the first time more than 60 years ago, although thinking machines and robots had long formed a genre of their own in science fiction. When the term was coined in the mid-1950s, it was only about two decades since the British mathematician Alan Turing had described the universal computing machine that is the theoretical basis of today’s computers. Although vastly more powerful, today’s computers are still essentially improvements on the machine conceived by Turing. Computers do not think by themselves; they follow the commands we give them through code.

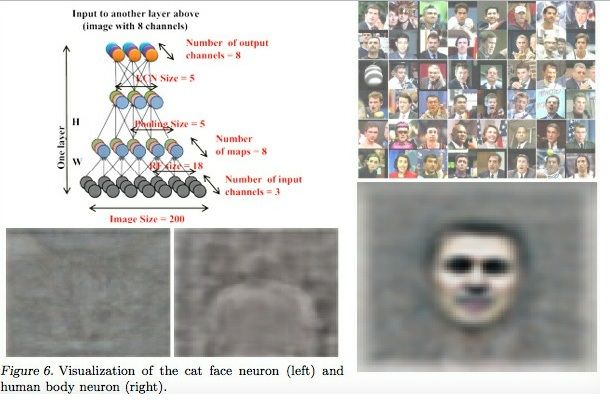

A.I., and specifically artificial general intelligence (AGI), or ‘strong A.I.’, is very different. Here, the software will simulate human intelligence and the machines will be self-taught. They will develop and improve themselves through observation, trial and error, and communication.

Whether AGI would develop consciousness or self-awareness is a good question. In science fiction, AGI is commonly associated with traits observed in living beings, such as consciousness, sentience, sapience, and self-awareness. But it is unclear whether a computer that behaves as intelligently as a person must necessarily also have a mind and consciousness. AGI refers only to the level of intelligence that the machine displays, with or without a mind.

Some have raised the dystopian nightmare of a computer developing so fast that it would very soon acquire god-like abilities and technology beyond human comprehension. The ability to reprogram and improve itself, a feature called “recursive self-improvement”, would make it better at improving itself; it would probably continue doing so in a rapidly accelerating cycle, leading to an intelligence explosion and the emergence of superintelligence.

“The coming of computers with true humanlike reasoning remains decades in the future, but when the moment of “artificial general intelligence” arrives, the pause will be brief. Once artificial minds achieve the equivalence of the average human IQ of 100, the next step will be machines with an IQ of 500, and then 5,000. We don’t have the vaguest idea what an IQ of 5,000 would mean. And in time, we will build such machines–which will be unlikely to see much difference between humans and houseplants.”

– David Gelernter, attributed, “Artificial intelligence isn’t the scary future. It’s the amazing present.”, Chicago Tribune, January 1, 2017

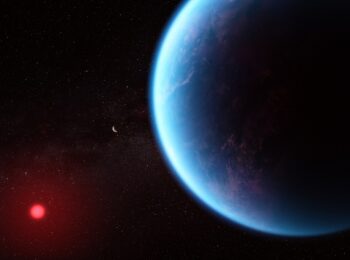

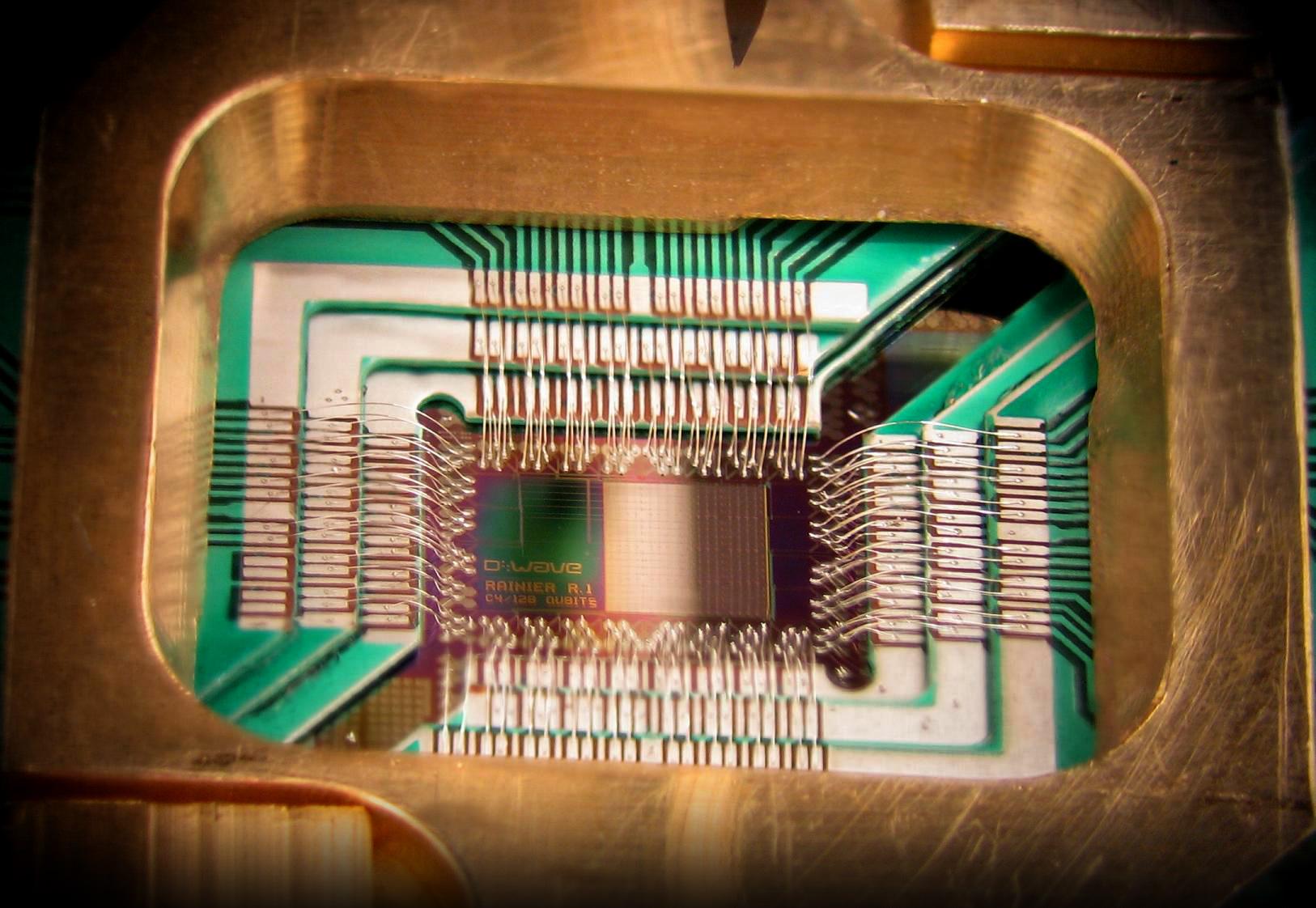

We have yet to arrive at AGI, although much research is being done all over the world. Much research is also invested in quantum computing, which is still nascent; if realized, quantum computers could be far more powerful than today’s computers for certain classes of problems. They might allow artificial intelligence, big data, and machine learning to become far more advanced.

In the case of ‘weak artificial intelligence’ (weak A.I.), also known as ‘narrow A.I.’, however, many examples have been available to consumers for several years, such as the personal assistants in smartphones. A.I. is also used in social networking, often to filter out false news, racism, and terrorist content.

“Weak or “narrow” AI, in contrast, is a present-day reality. Software controls many facets of daily life and, in some cases, this control presents real issues. One example is the May 2010 “flash crash” that caused a temporary but enormous dip in the market.”

– Ryan Calo, Center for Internet and Society, Stanford Law School, 30 August 2011.

Many point out that A.I. will improve society and facilitate people’s everyday lives, and that the jobs that disappear will be replaced by alternatives. Many others are worried and see the technological development as a pure dystopia.

But these are only educated guesses, and no one knows for sure how the A.I. revolution will impact society and humanity. What can be said with certainty is that many companies are attracted to the idea of a 24-hour employee who is never sick and takes no vacation.

There may be little to learn from previous automation breakthroughs. In those, creative destruction was a very real aspect: some jobs disappeared with the invention of a superior technology, while new sectors of the economy emerged to replace the old jobs.

As for the A.I. revolution, one can only hope that companies look beyond profitability, because unemployment due to A.I., a form of technological unemployment, may lack the “creative” part of creative destruction.

What distinguishes A.I. from other technological developments, such as the introduction of robots into the manufacturing industry, is the cognitive aspect.

With A.I. support, many professional jobs that today require a university degree can also be automated. Take JP Morgan’s A.I. software COIN, which uses text analysis to review business deals in just a couple of seconds, work that takes business lawyers 360,000 working hours to do.

Perhaps we should have a broad discussion about its political, philosophical, and even religious aspects. Few people know what opportunities this new technology can offer, and even fewer are aware of its risks. But there will definitely be both positive and negative consequences.

One thing is sure: innovation cannot be stopped, and based on prior experience, new technologies can be used to create growth. There is no doubt that technology can replace jobs, but it can also free up resources.

“What we should be more concerned about is not necessarily the exponential change in artificial intelligence or robotics, but about the stagnant response in human intelligence.”

– Anders Sorman-Nilsson, “Will Artificial Intelligence Take Our Jobs? We Asked A Futurist”, Huffington Post, February 16, 2017